The cloud engineer was the bottleneck. AI made it the failure mode.

CEO & Co-founder at CloudBooster. 12+ years in cloud infrastructure. Writing about how cloud infrastructure gets built and operated in the AI era.

For most of the last decade, companies running serious workloads on the cloud have leaned on one role to actually operate it. Call it cloud engineer, DevOps, SRE, platform engineer. The titles drift. The job is recognizable: someone designs the change, evaluates it against security and cost and blast radius, approves it, applies it, watches what happens, and writes the next change when something drifts.

In the orgs that have this role, it's usually one of the more expensive engineering hires. Months to hire in the US. Spends a lot of its time mechanically applying policies that already live in someone's head. Often the bottleneck for product teams waiting on infra.

For a long time, that was tolerable. Change volume was bounded by how fast humans could write Terraform. The bottleneck and the author were often the same person, so the queue self-regulated.

AI broke that.

What changed in the last 18 months

Through 2025, we watched teams try the obvious thing: keep the cloud engineer, give them Cursor or Claude Code. We saw the same pattern repeat across teams we worked with.

Week one, the engineer reviews 3 AI-generated PRs a day instead of writing them. Productivity feels great. Week four, it's 10. Review still works but the reviewer is skimming. Week ten, it's 40 a day, half from developers who never touched infra before, and the reviewer is rubber-stamping anything that passes CI. A few months in, the team finds a security group with 0.0.0.0/0 in production. Nobody remembers approving it. Git history says the cloud engineer did.

The reviewer didn't become careless. The volume outran human attention. PRs merge unread. The gate is still there on the org chart. It isn't there in practice.

Authoring infrastructure used to be the scarce resource. Now it's free. Reviewing is scarce, and reviewing scales worse than authoring because authors can parallelize and reviewers can't.

Why the role was always the wrong primitive

The cloud engineer was never the unit of value. The unit of value is the change: one modification to running infrastructure, from "I want a thing" to "the thing is running, governed, observable, recoverable." The cloud engineer was just the person doing all the steps of that lifecycle by hand.

What a cloud engineer does in a typical week: translate a request into a change, write the IaC, evaluate against security/cost/blast radius/compliance, get approval, apply under controlled execution, watch the result, notice drift, write the next change.

Most of those steps are policy application. Not judgment. Application. The policies exist. They live in a wiki, a runbook, a Slack thread, or the cloud engineer's head. The cloud engineer is the meat that turns "policy says X" into "X is applied."

Once you see the role this way, "AI assists the cloud engineer" stops looking like the long arc. The cloud engineer was an artifact of needing a human to apply policy at every step. AI doesn't just make that human faster. It changes whether the human needs to be the apply mechanism for the routine cases at all.

The new failure mode

The old failure mode was "we can't ship infra fast enough." Painful, but visible. You knew the queue was long because product teams complained.

The new failure mode is invisible. The PRs ship. CI is green. Six weeks later you find a bucket with public read access nobody approved, an IAM role with s3:* that should have been one prefix, an RDS instance two sizes too big because the prompt said "high memory," a security group with port 22 open to the world for a debug session in March.

None of these get caught at review because review is a stamp. None get caught by terraform plan because the IaC is valid, and plan only reports what will change, not whether the change complies with policy. They only get caught by something that looks at the actual change against the actual account against the actual policy. That something used to be a senior engineer with context. At AI volume, it can't be.
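As a rough illustration of checking the change itself against policy, the sketch below scans the JSON form of a Terraform plan (the output of `terraform show -json`) for two of the findings listed above: ingress open to the world and wildcard IAM actions. The function name `find_violations`, the sample plan, and the choice of checks are assumptions made for this example. It covers only the change-versus-policy comparison, not the state of the live account.

```python
import json

# CIDR ranges that mean "anyone on the internet".
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}


def _as_list(value):
    """IAM policy JSON allows a single item or a list; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_violations(plan: dict) -> list[str]:
    """Return one readable finding per risky setting in the planned changes."""
    findings = []
    for rc in plan.get("resource_changes", []):
        address = rc.get("address", "<unknown>")
        # "after" is null for deletions, so fall back to an empty dict.
        after = (rc.get("change") or {}).get("after") or {}

        if rc.get("type") == "aws_security_group":
            for rule in after.get("ingress") or []:
                cidrs = set(rule.get("cidr_blocks") or [])
                cidrs |= set(rule.get("ipv6_cidr_blocks") or [])
                if cidrs & OPEN_CIDRS:
                    findings.append(
                        f"{address}: ports {rule.get('from_port')}-"
                        f"{rule.get('to_port')} open to the world"
                    )

        if rc.get("type") == "aws_iam_policy":
            document = json.loads(after.get("policy") or "{}")
            for stmt in _as_list(document.get("Statement")):
                if stmt.get("Effect") != "Allow":
                    continue
                for action in _as_list(stmt.get("Action")):
                    if action.endswith("*"):
                        findings.append(f"{address}: wildcard action {action}")
    return findings


# Hypothetical plan fragment, trimmed to the fields the checks read.
example_plan = {
    "resource_changes": [
        {"address": "aws_security_group.debug", "type": "aws_security_group",
         "change": {"actions": ["create"], "after": {"ingress": [
             {"from_port": 22, "to_port": 22, "cidr_blocks": ["0.0.0.0/0"]}]}}},
        {"address": "aws_security_group.internal", "type": "aws_security_group",
         "change": {"actions": ["create"], "after": {"ingress": [
             {"from_port": 443, "to_port": 443, "cidr_blocks": ["10.0.0.0/8"]}]}}},
        {"address": "aws_iam_policy.app", "type": "aws_iam_policy",
         "change": {"actions": ["create"], "after": {"policy": json.dumps(
             {"Statement": {"Effect": "Allow", "Action": "s3:*", "Resource": "*"}})}}},
    ]
}

findings = find_violations(example_plan)
for finding in findings:
    print(finding)
```

Both flagged resources would pass `terraform plan` without complaint. The check works because it reads what the change will do, not whether the configuration is valid.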

What software-as-operator actually looks like

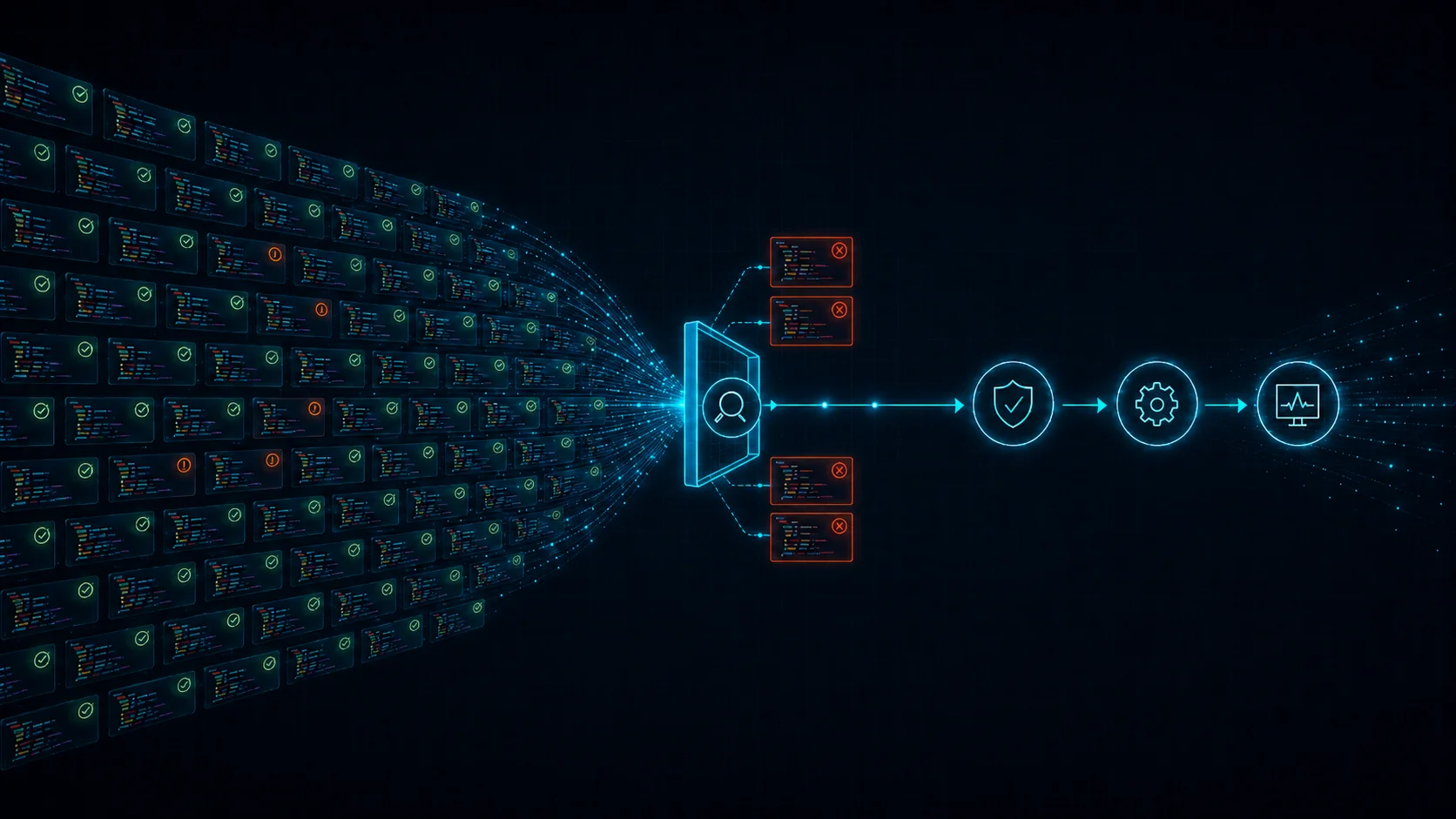

The replacement isn't "AI does the cloud engineer's job." That's the same role with worse judgment. The replacement is splitting the role into its parts and running each part with the right tool.

Authoring. What AI is genuinely good at. The model doesn't need to be careful because it isn't the safety layer.

Evaluation. Deterministic code. Rule engines. The customer's policies, encoded once, applied to every change automatically. Not LLM judgment.
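A minimal sketch of what "encoded once, applied to every change" could look like: each policy is a small pure function, and the engine runs every rule against every change. The names `Rule`, `Finding`, `evaluate`, the rule IDs, and the allowed instance classes are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy inputs; a real team would load these from its own config.
ALLOWED_DB_CLASSES = {"db.t3.medium", "db.r6g.large"}
PUBLIC_ACLS = {"public-read", "public-read-write"}


@dataclass(frozen=True)
class Rule:
    rule_id: str
    resource_type: str
    severity: str                     # "high" or "low"
    violates: Callable[[dict], bool]  # pure function of the planned attributes


@dataclass(frozen=True)
class Finding:
    rule_id: str
    severity: str
    address: str


RULES = (
    Rule("s3-no-public-acl", "aws_s3_bucket_acl", "high",
         lambda attrs: attrs.get("acl") in PUBLIC_ACLS),
    Rule("rds-approved-size", "aws_db_instance", "low",
         lambda attrs: attrs.get("instance_class") not in ALLOWED_DB_CLASSES),
)


def evaluate(changes: list[dict], rules: tuple[Rule, ...] = RULES) -> list[Finding]:
    """Apply every rule to every change; same input always gives same output."""
    findings = []
    for change in changes:
        for rule in rules:
            if rule.resource_type == change["type"] and rule.violates(change["attrs"]):
                findings.append(Finding(rule.rule_id, rule.severity, change["address"]))
    return findings


# Hypothetical changes, reduced to the attributes the rules inspect.
changes = [
    {"address": "aws_s3_bucket_acl.assets", "type": "aws_s3_bucket_acl",
     "attrs": {"acl": "public-read"}},
    {"address": "aws_db_instance.main", "type": "aws_db_instance",
     "attrs": {"instance_class": "db.r6g.4xlarge"}},
    {"address": "aws_db_instance.reports", "type": "aws_db_instance",
     "attrs": {"instance_class": "db.t3.medium"}},
]

results = evaluate(changes)
for finding in results:
    print(finding.severity, finding.rule_id, finding.address)
```

Because the rules are plain functions with no model in the loop, the fortieth change of the day gets exactly the same scrutiny as the first.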

Approval. Routed based on what evaluation found. Auto-approve when policies pass and risk is low. Human approver for residual judgment the policy graph hasn't absorbed yet. The human review surface starts large and shrinks every quarter as more policies get codified.
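One way the routing could be sketched, under two assumptions that go beyond the text: "risk is low" is approximated as "every resource type the change touches is covered by codified policy", and a change with policy violations is blocked outright. `Route`, `route_change`, and the resource types used are hypothetical.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    BLOCKED = "blocked"


def route_change(resource_types, violations, codified_types) -> Route:
    """Pick an approval path from evaluation results.

    resource_types: resource types the change touches
    violations:     findings produced by the evaluation step
    codified_types: resource types that already have codified policy
    """
    if violations:
        # Assumption: failed policy goes back to the author, not to a human queue.
        return Route.BLOCKED
    if not set(resource_types) <= set(codified_types):
        # Something here is outside what policy covers: residual judgment.
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE


codified = {"aws_s3_bucket", "aws_security_group"}

print(route_change({"aws_s3_bucket"}, [], codified))
print(route_change({"aws_s3_bucket", "aws_kms_key"}, [], codified))
print(route_change({"aws_security_group"}, ["ssh open to the world"], codified))

# Codifying policy for one more type moves that change out of the human queue.
print(route_change({"aws_s3_bucket", "aws_kms_key"}, [], codified | {"aws_kms_key"}))
```

The last call is the shrinking review surface in miniature: the same change that needed a human is auto-approved once its resource type has codified policy.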

Execution. A controlled runner under the customer's IAM. One path every change goes through, regardless of who or what authored it.

Monitoring and remediation. Drift detection feeds back into authoring. The system notices state diverged from intent, drafts the change to reconcile, sends it back through the same pipeline.
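The drift loop can be sketched as a diff between intended and observed state that produces a draft change. This is a simplified illustration: `detect_drift`, `draft_reconciliation`, and the flattened attribute names such as `ssh_cidr` are hypothetical, and a real system would read intended state from IaC and observed state from the cloud provider's APIs.

```python
def detect_drift(intended: dict, observed: dict) -> dict:
    """Return {address: {attr: (intended_value, observed_value)}} where they differ."""
    drift = {}
    for address, want in intended.items():
        # A resource missing from the account shows up as every attribute differing.
        have = observed.get(address, {})
        diffs = {
            attr: (value, have.get(attr))
            for attr, value in want.items()
            if have.get(attr) != value
        }
        if diffs:
            drift[address] = diffs
    return drift


def draft_reconciliation(drift: dict) -> list[dict]:
    """Turn drift into draft changes that restore the intended values.

    The drafts are proposals only; they would go through the same
    evaluate, approve, execute path as any other change.
    """
    return [
        {
            "address": address,
            "origin": "drift-detection",
            "set": {attr: want for attr, (want, _have) in diffs.items()},
        }
        for address, diffs in drift.items()
    ]


# Hypothetical state: someone opened SSH to the world outside the pipeline.
intended = {"aws_security_group.web": {"ssh_cidr": "10.0.0.0/8", "https_cidr": "0.0.0.0/0"}}
observed = {"aws_security_group.web": {"ssh_cidr": "0.0.0.0/0", "https_cidr": "0.0.0.0/0"}}

drift = detect_drift(intended, observed)
proposed = draft_reconciliation(drift)
print(drift)
print(proposed)
```

Tagging the draft with its origin keeps the audit trail clear about which changes came from a person, which from a model, and which from the system reconciling itself.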

This isn't a future product. It's how a senior cloud engineer already operates mentally. The difference is whether the steps live in one person's head or in software the whole team can use.

The honest version of the trajectory

No tool today fully replaces a senior cloud engineer. Not us, not anyone. What is true is that the trajectory is one-way.

Today: AI authors, deterministic engine evaluates against codified policy, human approves residual judgment, system applies and monitors. That's already two and a half of the cloud engineer's eight steps removed from human hands. Each quarter the policy graph gets denser. Each quarter the residual judgment surface shrinks. Drift remediation moves into software first because the pattern is well-defined. Capacity planning next. Incident response is the hardest and the latest.

The teams that get to the end of this trajectory first are the ones who started running cloud operations in software early, with a small enough team that the policy graph stayed coherent. The teams that get there last are the ones who hired a platform team in 2024, scaled it to 12 engineers, and now have to argue with 12 humans about whether the software should do their job.

Where to go from here

If your team uses AI for infrastructure today and the review queue feels OK, check back in a couple of months. Most teams we talk to find their change volume has grown several times over while the number their reviewer can carefully look at hasn't moved.

If you're already past that line, the fix probably isn't "hire faster." Hiring a cloud engineer in the US typically takes months, and the volume problem keeps growing in the meantime. The more durable fix is to move the policy application part of the job into software now.

That's what we built CloudBooster for. AI authors the change. Deterministic policy evaluates it. Approval routes based on residual judgment. Execution runs under your IAM. Drift feeds the next change back through the loop. The cloud engineer's job, unbundled and run in software.